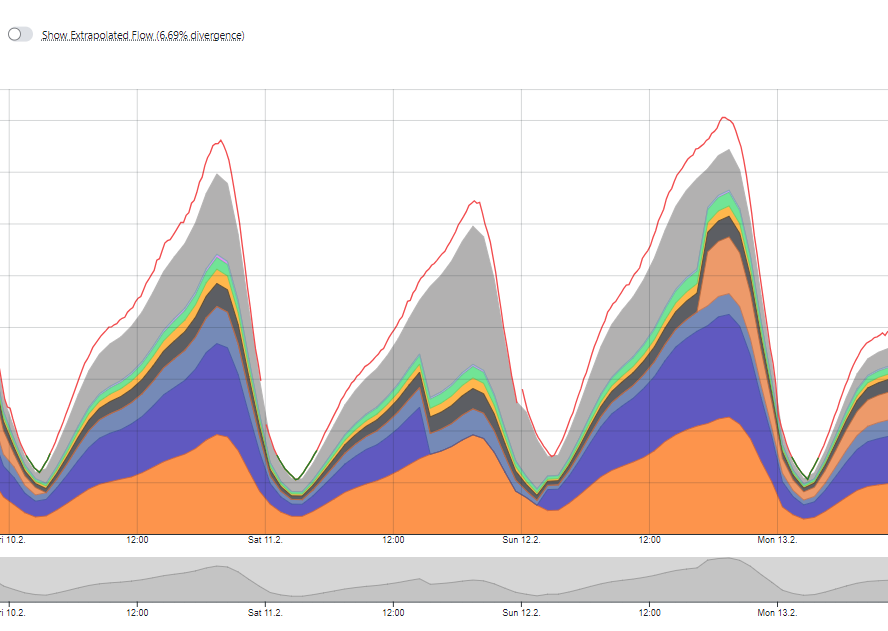

As COVID-19 continues to spread globally at an alarming rate, governments and large companies alike are taking strong preventative measures to slow the spread of the disease, such as cancelling large events, limiting travel, and requiring employees to work from home. These initiatives are moving large numbers of people online very quickly, which is straining networks and degrading quality of service. With so many people now relying on the internet for their livelihood during this pandemic, service providers need control over traffic volumes in order to maintain their quality of service. For that, they need excellent network visibility and analytics.

Telecoms in Europe see surges in traffic

Within just a few days, countries such as France, Spain and Italy are already seeing the effects of the new social restrictions on their networks. Telecoms in Spain, for example, asked customers today to “adhere to some best practice in an attempt to maintain some sort of tolerable experience”. French telecoms, meanwhile, are starting to practice bandwidth discipline by limiting video streaming sites such as Netflix and YouTube in order to prioritize people working from home.

On top of that, mobile networks are also under heavy load, with traffic increasing as loved ones try to stay connected with one another and colleagues try to collaborate via video calls, messaging apps, and regular telephony.

Network analytics is important for QoS

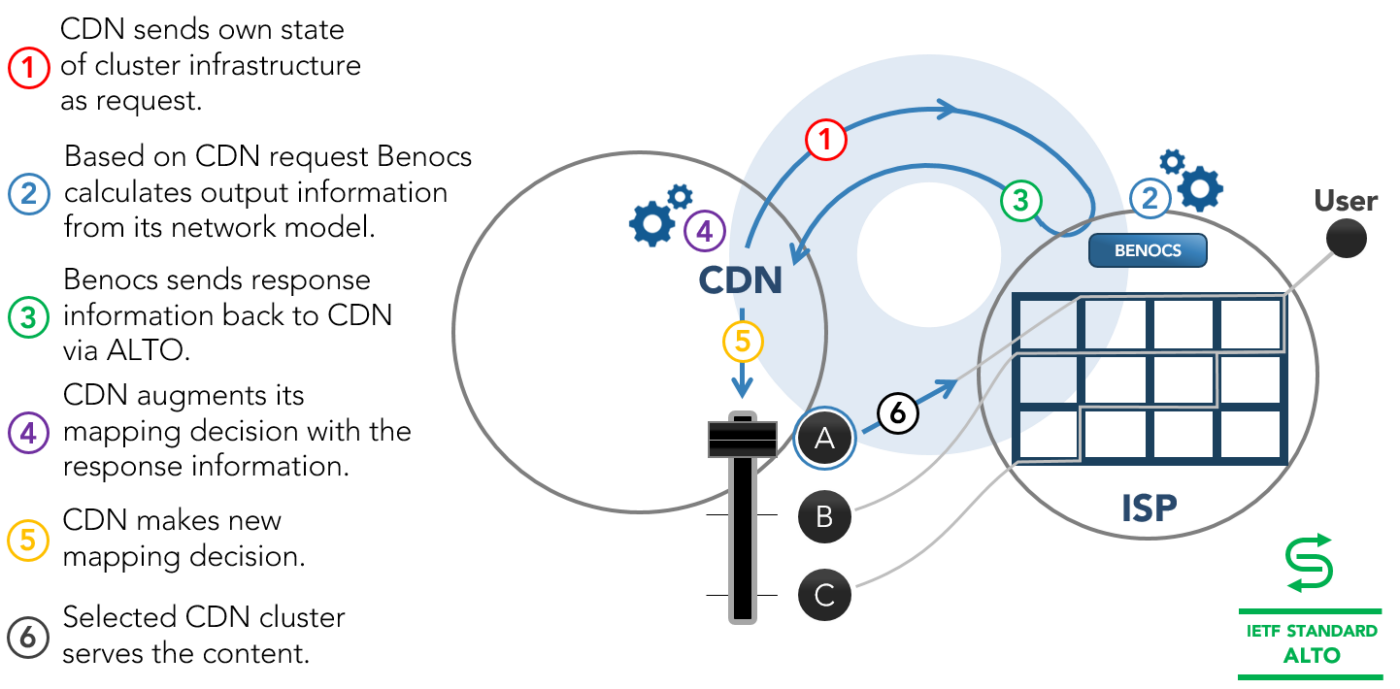

To cope with the sudden increase in traffic while maintaining quality of service for end users, network operators need to understand their traffic: where it originates, where it terminates, what type of traffic is traveling on their network, and how much of it there is. With this information, operators can work with OTT (over-the-top) providers to decrease traffic volumes, for example by reducing video quality from HD to 480p or lower during hours of high congestion. That way, they can keep providing their services without reducing speed or connectivity.
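To make the four questions above concrete (origin, destination, type, and volume), here is a minimal Python sketch that totals traffic volume along each of those dimensions. It is only an illustration: the `FlowRecord` fields, the helper names `volume_by` and `share_by_app`, and the sample values are all hypothetical and do not represent any particular product's data model or real measurements.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowRecord:
    """Hypothetical, simplified flow record (all field names are assumptions)."""
    src: str         # where the traffic originates
    dst: str         # where the traffic terminates
    app: str         # type of traffic, e.g. "video" or "conferencing"
    hour: int        # hour of day (0-23) in which the flow was observed
    bytes_sent: int  # volume carried by the flow


def volume_by(flows, key):
    """Sum bytes per distinct value of one FlowRecord field (e.g. 'src', 'app')."""
    totals = Counter()
    for flow in flows:
        totals[getattr(flow, key)] += flow.bytes_sent
    return totals


def share_by_app(flows):
    """Return each application's fraction of total traffic volume."""
    totals = volume_by(flows, "app")
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {app: volume / grand_total for app, volume in totals.items()}


# Made-up sample data, purely for illustration.
flows = [
    FlowRecord("cdn-a", "home-1", "video", 20, 700),
    FlowRecord("cdn-a", "home-2", "video", 21, 500),
    FlowRecord("office-vpn", "home-1", "conferencing", 10, 200),
    FlowRecord("home-2", "mail-host", "email", 10, 100),
]

if __name__ == "__main__":
    print("Volume by origin:", dict(volume_by(flows, "src")))
    print("Volume by type:", dict(volume_by(flows, "app")))
    print("Volume by hour:", dict(volume_by(flows, "hour")))
    print("Share by type:", share_by_app(flows))
```

In a breakdown like this, an operator could see which traffic type and which hours dominate the load, which is the kind of insight that would inform a conversation with an OTT provider about lowering video quality during congested periods.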

During times of emergency, it is especially important that the network does not fail, as more people go online to stay connected to work and loved ones. Network operators therefore need access to key network insights for targeted network management. In short, they need network analytics.